Members

Richard Li

Mehrab Bin Morshed

Gregory D. Abowd

Sponsors

Generously supported by an Intel SRC grant

Publications

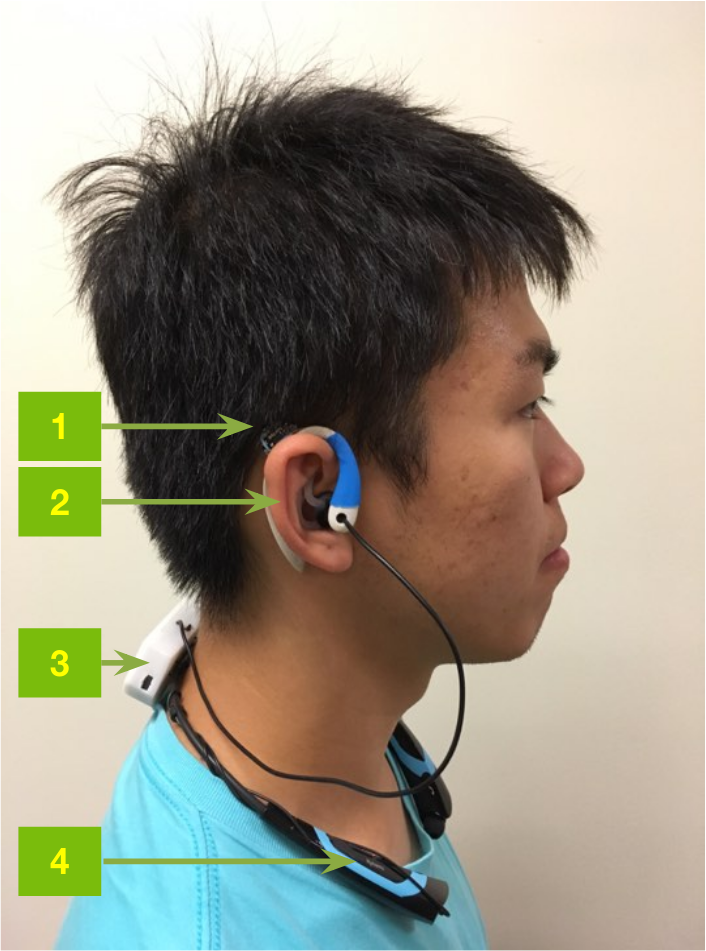

Ear-mounted solution:

IMWUT Vol. 1 Issue 3, 2017

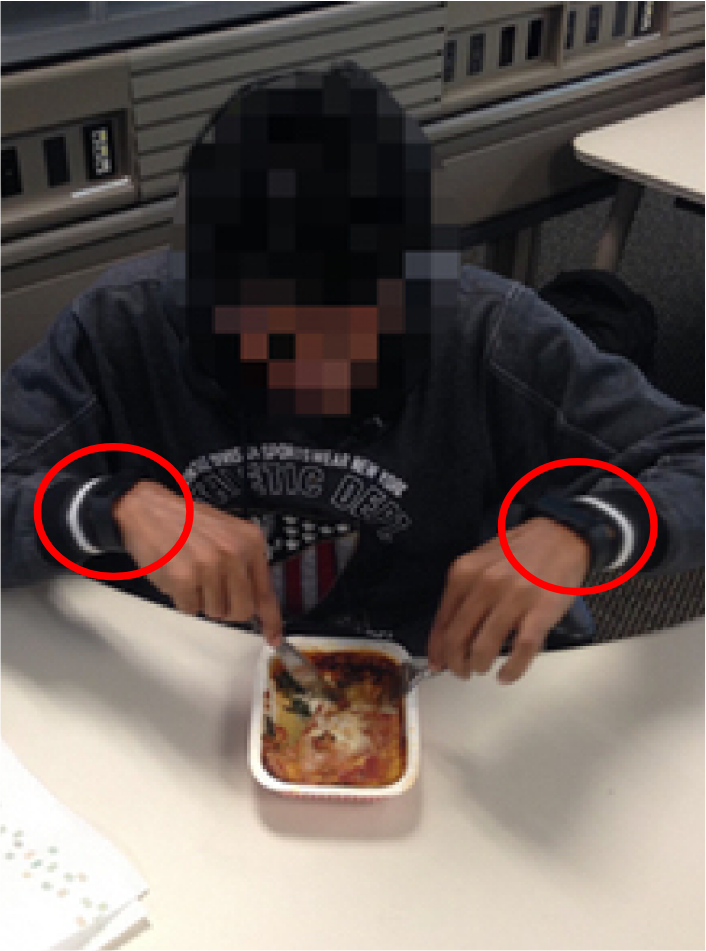

Wrist-worn solution:

UbiComp 2015

Video

Demo Video on YouTube

Status

Ongoing

Eating Detection: Sensing and Applications

Chronic and widespread diseases such as obesity, diabetes, and hypercholesterolemia require patients to monitor their food intake, and food journaling is currently the most common method for doing so. However, food journaling is prone to self-report bias and recall errors, and patients often do not keep it up. This project explores different ways of recognizing eating episodes, as well as the applications that such recognition makes possible.

Commodity Sensing

One option for recognizing human behaviors and activities is to leverage the devices that users already have, such as smartphones and smartwatches. These devices commonly feature an inertial measurement unit (IMU) for detecting linear and rotational movements. Previous work used the IMUs in smartwatches to detect the motion of bringing food to the mouth in order to estimate when a user was eating.
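As a rough illustration of how IMU data is commonly prepared for this kind of recognition, the sketch below cuts a stream of accelerometer readings into overlapping windows and computes simple per-axis statistics that a classifier could consume. It is not the pipeline from the publication: the function names, the window size, the step, and the choice of mean and variance as features are all assumptions made for the example.

```python
from statistics import mean, pvariance


def sliding_windows(samples, size, step):
    """Yield consecutive windows of `size` samples, advancing by `step`."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def window_features(window):
    """Return per-axis mean and variance for one window of (x, y, z) readings."""
    features = []
    for axis in zip(*window):  # regroup the readings by axis
        features.append(mean(axis))
        features.append(pvariance(axis))
    return features


def extract_features(samples, size=50, step=25):
    """Hypothetical helper: one feature vector per window (sizes are assumed)."""
    return [window_features(w) for w in sliding_windows(samples, size, step)]
```

Each feature vector could then be labeled as eating or non-eating and passed to any standard classifier; the 50 percent overlap between windows is a common convention rather than a requirement.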

See our UbiComp 2015 publication for more details.

Novel Sensing

While using commodity devices might make deployment easier, their sensors might not be well suited to the phenomenon being recognized. As a result, a number of novel sensing techniques have been employed to recognize eating. These include tracking the deformation of the ear canal with a proximity sensor pointed inside the ear, and measuring the flexing of the temporalis muscle (a chewing muscle that runs from the side of the skull, above the ear, to the lower jaw).
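Chewing deforms the ear canal rhythmically, so one simple way to reason about a proximity signal is to ask how often it oscillates. The sketch below estimates an oscillation rate by counting upward crossings of the signal's mean and checks whether that rate falls inside a band. It is only an illustration, not the method from the publication: the function names and the 0.5 to 3.0 Hz band are assumptions chosen for the example.

```python
def oscillation_rate_hz(signal, sample_rate_hz):
    """Estimate oscillations per second by counting upward crossings of the mean."""
    if len(signal) < 2:
        return 0.0
    baseline = sum(signal) / len(signal)
    crossings = sum(
        1
        for prev, cur in zip(signal, signal[1:])
        if prev < baseline <= cur
    )
    duration_s = len(signal) / sample_rate_hz
    return crossings / duration_s


def looks_like_chewing(signal, sample_rate_hz, low_hz=0.5, high_hz=3.0):
    """Hypothetical check: is the oscillation rate inside an assumed chewing band?"""
    rate = oscillation_rate_hz(signal, sample_rate_hz)
    return low_hz <= rate <= high_hz
```

A flat signal produces no crossings and is rejected, while a signal that rises and falls a couple of times per second is accepted. A real system would need filtering and a learned model to separate chewing from talking or other jaw movement.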

See our IMWUT 2017 publication for more details.

Applications

At this point, most of our efforts have focused on recognizing when eating episodes occur. Once we have this data, there are a number of questions to explore: Can we then detect what and how much users are eating? How does eating correlate with their mental health? What constitutes healthy temporal eating habits? These are all applications that we are actively exploring in partnership with the CampusLife project.